In the United States, June 1st marks the beginning of hurricane season. With everything else 2020 has given us, we hoped for a reprieve from at least one bad thing this year; however, NOAA is predicting a busy season with an above-normal number...

Disaster Studies

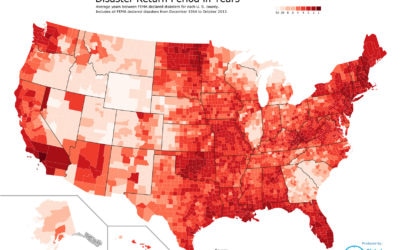

FEMA Disaster Map

Return Period in Years for FEMA Declared Disasters by County. This map, produced by Global Data Vault, shows the average number of years between FEMA-declared disasters for each county in the United States. One can surmise that the more darkly shaded counties are at higher...

California Wildfire Occurrences Highest in October

Wildfires have taken a top spot in the news as of late. They cost American taxpayers between $20 billion and $100 billion every year, in expenses that range from air pollution and soil degradation to public health challenges and loss of human life. If it...

Predicting Hurricanes

Damage Caused by Hurricanes in the US. Even though hurricanes mainly threaten the US Gulf and East Coasts, they are the most costly and destructive of all the natural disasters that happen in America. Hurricanes can strike anywhere from Southern Texas to New England,...

A Tornado’s Costly Path

Recent events remind us that tornadoes often have a sad and deadly cost for the victims in their path. Even an F-3 tornado can cause devastation and the total destruction of everything it touches. Homes are reduced to matchsticks, office buildings to piles of rubble....

Information Destruction Through History

Loss of Knowledge. Information is the most valuable commodity in the world. All human progress depends on the accumulation and preservation of information. When information is lost, human progress suffers. This infographic displays some of the most significant losses of...

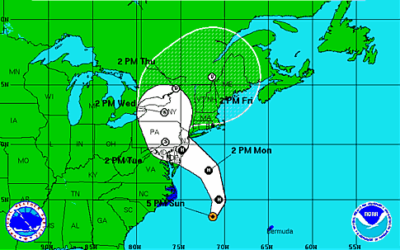

What went wrong in the days before Super Storm Sandy?

“Not only were many firms unsure about whether their galoshes were waterproof,” Bart Chilton, the regulator at the Commodity Futures Trading Commission, said, “they hadn’t even tried them on.” Chilton’s sharp-tongued critique is in response to the apparent lack of...

Data Center Exposure and Recovery in New York City

Hurricane Sandy provided a fascinating opportunity to study both the level of disaster planning and the resilience of New York City data centers. This article will examine a) what actually happened, b) what the risk was, and c) what lessons were learned. What...

Hurricane Sandy Update

Global Data Vault continues to support our customers in the affected areas, especially New York and New Jersey. We have been busier than usual, but all of our customer recoveries have gone very well. We wish all of our...

Hurricane Sandy’s Reach

The devastation from Hurricane Sandy is widespread, reaching even areas of the country outside the storm zone. Even so, we want to assure our customers that NONE of our production systems were affected by Hurricane Sandy’s wrath on the East Coast....